Something interesting has been happening in the AI community.

Developers, hobbyists, and independent builders have quietly been buying up Apple Mac mini machines — not for traditional desktop use, but as compact AI servers.

Why?

Because the modern Mac mini, especially the Apple Silicon models, hits a rare balance:

- Unified memory architecture

- Efficient power consumption

- Silent 24/7 operation

- Surprisingly strong local inference performance

- Small physical footprint

In an era dominated by cloud AI, the Mac mini has become the “AI box” of choice for people who want control over compute, data, cost, and experimentation.

And that’s exactly why I built mine.

Why a Home AI Lab Makes Sense in This Season of My Life

I’m transitioning into consulting and semi-retirement. That means two things:

- I don’t want to stop building.

- I want control over how and what I build.

For decades I operated inside enterprise systems — DevOps pipelines, observability stacks, Kubernetes clusters, regulated financial environments.

Now?

I get to design the lab exactly how I want it.

A home AI lab lets me:

- Experiment without corporate constraints

- Test AI-native workflows

- Prototype consulting frameworks

- Automate parts of my own career transition

It’s not just tinkering.

It’s infrastructure for the next phase of my professional life.

The Stack: Local Models, Real Work

At the core of my lab is Ollama.

Ollama lets me run large language models locally: no calls to a hosted API, no cloud dependency, no data leaving my machine. I’m currently running Llama 3 along with embedding models for semantic search.
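To make "local" concrete, here is a minimal sketch of talking to Ollama from Python over its local HTTP endpoint. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3` has already been pulled; the function names `build_request` and `generate` are my own illustrative helpers, not part of Ollama.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed unchanged).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]


# Example call (requires a running Ollama server):
# print(generate("Explain unified memory in one sentence."))
```

The request never leaves `localhost`, which is the whole point: the prompt and the response stay on the machine.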

That alone changes the equation.

But the real fun begins when you introduce agents.

Enter OpenClaw.

OpenClaw allows for autonomous workflows — agents that can reason, act, fetch data, and iterate.

That’s where this moved from “cool experiment” to “practical automation.”

Teaching the Lab Who I Am

One of my first real use cases?

Grounding the system in my own career.

I loaded:

- Multiple versions of my resume

- Historical role descriptions

- Leadership summaries

- Technical impact statements

- Target job postings

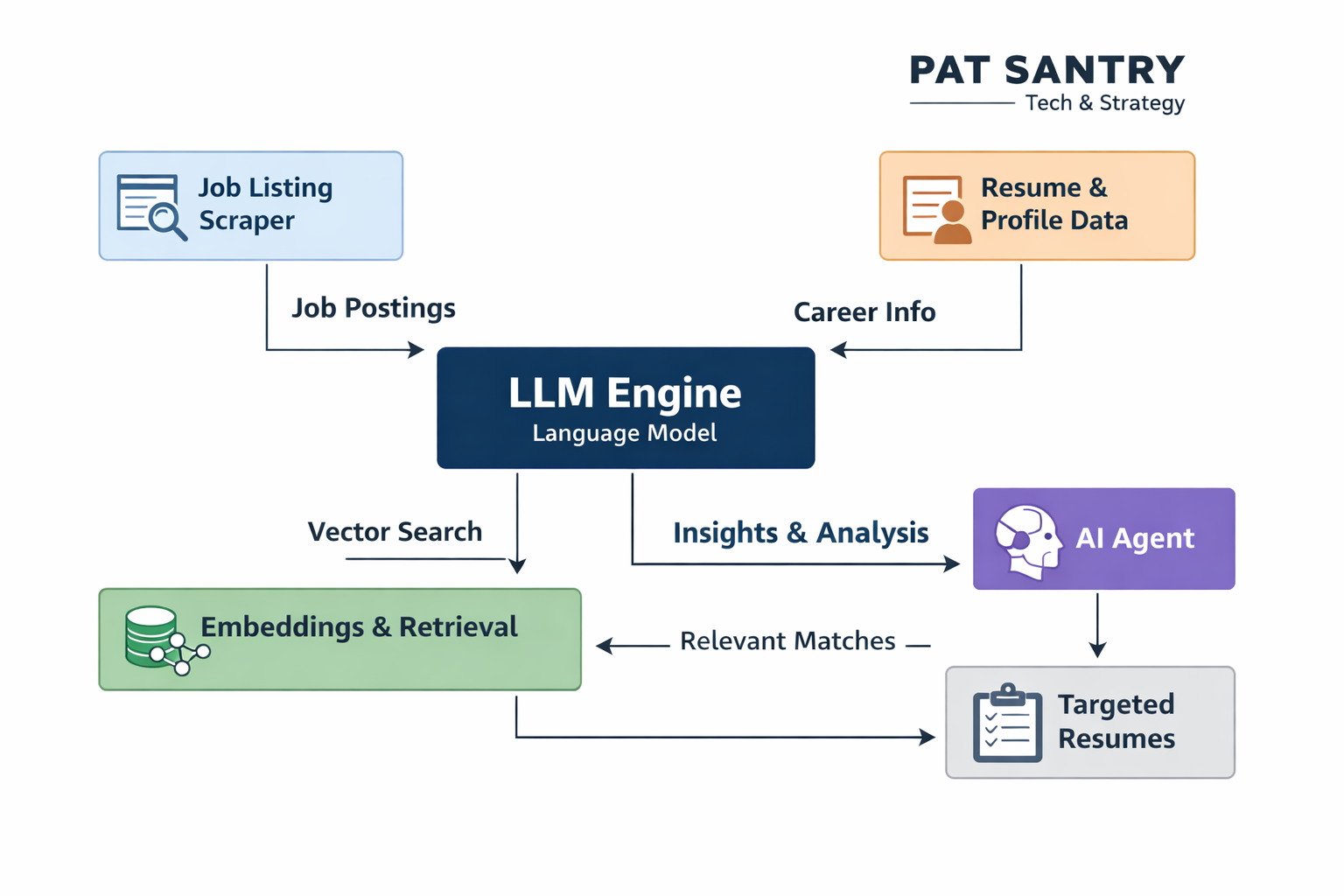

Using local embeddings and retrieval-augmented generation (RAG), I began building a system that:

- Understands my professional narrative

- Compares it to live job descriptions

- Identifies semantic alignment

- Generates a targeted resume for that specific role
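The retrieval step behind that list can be sketched in a few lines. This is an illustration under stated assumptions, not my production pipeline: `embed` here is a toy word-count stand-in so the example runs anywhere, whereas the real lab would swap in vectors from a local embedding model. The sample career snippets are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9+#]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_matches(job_text: str, chunks: list[str], k: int = 2) -> list[tuple[float, str]]:
    """Return the k career snippets most similar to the job description."""
    job_vec = embed(job_text)
    scored = [(cosine(job_vec, embed(chunk)), chunk) for chunk in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


# Hypothetical snippets standing in for resume and role-history chunks.
CAREER_CHUNKS = [
    "Led Kubernetes platform migration for trading systems",
    "Managed vendor contracts and budgets",
    "Built observability stack with Prometheus and Grafana",
]
JOB_POSTING = "Seeking platform engineer with Kubernetes and observability experience"

matches = top_matches(JOB_POSTING, CAREER_CHUNKS)
```

The retrieved snippets are what get passed to the language model as context, so the generated resume draws on the parts of my history that actually match the posting.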

Not a generic AI resume.

A structured, tailored, optimized document aligned to the language of the job.

As someone pivoting toward consulting, fractional CTO work, and strategic advisory roles, this matters.

Because positioning matters.

From Scraping to Targeting

The next evolution is workflow automation:

- Pull job listings from selected platforms

- Extract skills and requirement signals

- Rank them against my profile

- Flag high-probability matches

- Generate targeted resumes and cover letters automatically
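The extract, rank, and flag steps might look something like the sketch below. Everything in it is an assumption for illustration: the `Job` class, the skill vocabularies, and the 0.6 threshold are hypothetical, and fetching listings is left out entirely. A real version would likely use the model itself to extract requirement signals rather than a fixed keyword set.

```python
import re
from dataclasses import dataclass


@dataclass
class Job:
    title: str
    description: str


# Hypothetical skill vocabularies; a real profile would be much richer.
PROFILE_SKILLS = {"kubernetes", "devops", "observability", "terraform", "python"}
KNOWN_SKILLS = PROFILE_SKILLS | {"java", "react", "salesforce"}


def extract_skills(text: str) -> set[str]:
    """Pull recognized skill keywords out of free text."""
    return set(re.findall(r"[a-z]+", text.lower())) & KNOWN_SKILLS


def score(job: Job) -> float:
    """Fraction of the job's recognized skills that the profile covers."""
    required = extract_skills(job.description)
    if not required:
        return 0.0
    return len(required & PROFILE_SKILLS) / len(required)


def rank(jobs: list[Job], threshold: float = 0.6) -> list[tuple[float, str, bool]]:
    """Sort jobs by score, best first, and flag those at or above the threshold."""
    scored = sorted(((score(job), job) for job in jobs), key=lambda p: p[0], reverse=True)
    return [(s, job.title, s >= threshold) for s, job in scored]


jobs = [
    Job("Frontend Dev", "React and Java experience"),
    Job("Platform Lead", "Kubernetes, Terraform, observability, Python"),
]
ranking = rank(jobs)
```

Only the flagged listings would move on to resume and cover letter generation, which keeps the expensive model calls focused on roles worth pursuing.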

This is essentially building my own AI-driven career agent.

Not dependent on a SaaS platform.

Not tied to someone else’s subscription tier.

Running on my hardware.

That’s powerful.

Why Local AI Changes the Mindset

There’s something deeply satisfying about owning the compute layer.

In enterprise environments, we talk about:

- Infrastructure as Code

- Platform Engineering

- DevOps autonomy

Running local AI is the personal version of that.

It’s edge computing for the individual.

It also fits this season of life perfectly.

I’m no longer climbing corporate ladders.

I’m designing systems for myself.

Systems that:

- Help me consult smarter

- Help me write better

- Help me position strategically

- Help me think more clearly

The Mac mini isn’t just hardware.

It’s a laboratory for reinvention.

What This Becomes

Today it’s resume targeting.

Tomorrow it could be:

- AI-assisted memoir refinement

- Automated blog ideation pipelines

- Consulting proposal generators

- Local observability + AI experimentation

- AI-native DevOps frameworks

This lab isn’t about novelty.

It’s about building capability before the next wave fully crests.

Just like the early web days.

And I’ve seen that movie before.