By now it’s becoming clear that artificial intelligence raises some interesting philosophical questions.

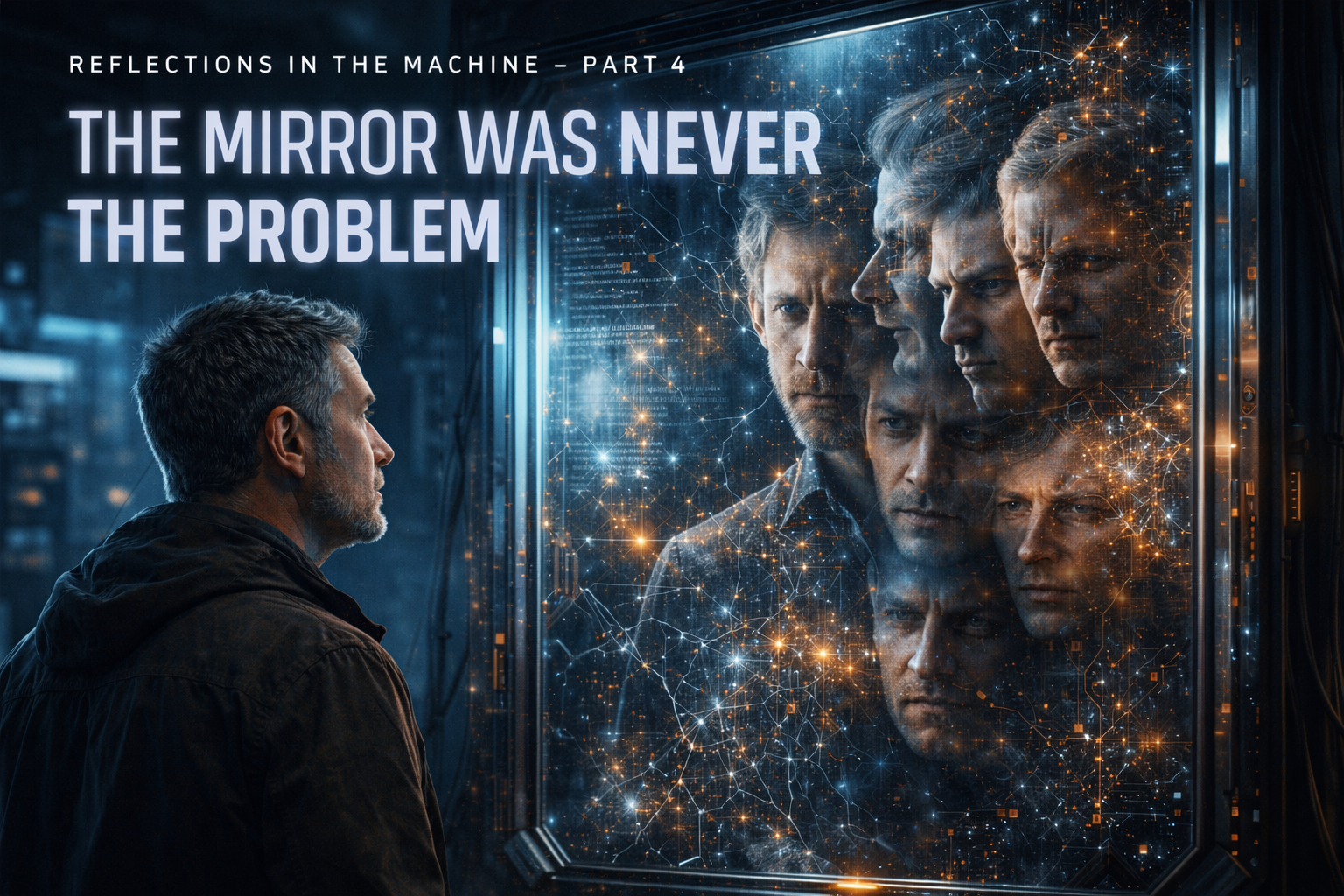

In this series we’ve explored the idea that AI can behave like a mirror. The more context you give it, the more clearly it reflects patterns in human thinking.

We also looked at what happens when the mirror begins participating in the conversation.

And we considered what might happen someday if the mirror stops reflecting entirely.

Those questions are worth asking.

But there’s another one that may matter more.

Is the technology actually the problem?

When a powerful new tool appears, the first reaction is usually fear. People assume the danger must come from the technology itself.

History suggests something different.

Fire didn’t introduce destruction to the world. Humans were already capable of that.

The printing press didn’t invent misinformation. It simply allowed ideas to travel faster.

The internet didn’t create human conflict. It just made those conflicts visible to everyone.

Technology rarely creates new human behavior.

It amplifies what is already there.

Artificial intelligence may follow the same pattern.

If someone approaches AI with curiosity, the system becomes a tool for exploration. If someone approaches it creatively, the system becomes a tool for building new ideas.

But amplification works both ways.

Bias can be amplified.

Manipulation can be amplified.

Pride can be amplified.

The machine simply scales the signal.

That’s why discussions about the “danger of AI” sometimes miss the deeper point.

The real variable isn’t the machine.

It’s the human operating it.

Technology has always acted as a magnifier for human nature. The more powerful the technology becomes, the more clearly it reveals the character of the people using it.

In that sense AI might not introduce a new problem at all.

It might simply expose an old one.

If a machine begins reflecting our thinking with perfect clarity, the uncomfortable possibility is this:

The danger may never have been the technology.

The danger may have always been us.